The internet was originally imagined as a space where people could share ideas freely. Yet many users today feel that their voices are filtered, hidden, or suppressed by invisible systems. This growing concern has led to the emergence of a concept often discussed in digital culture: lobasincensura.

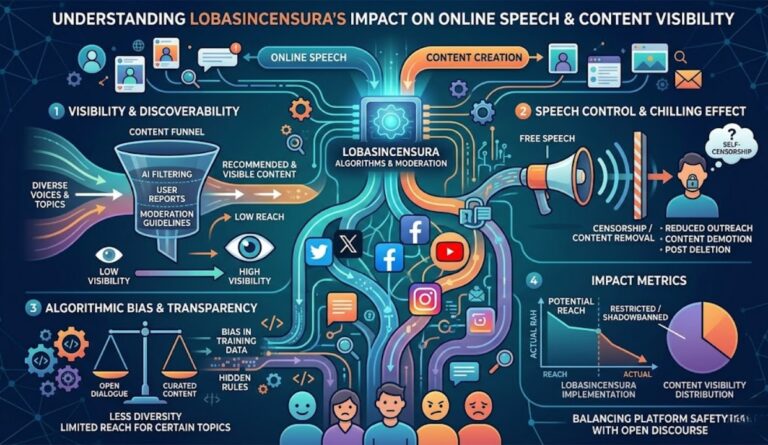

At its core, lobasincensura explores how modern digital platforms influence what people see, share, and discuss. It touches on complex topics such as algorithmic censorship, content moderation ethics, narrative control in online ecosystems, and the balance between freedom of expression and platform safety.

Understanding this concept is important for anyone who participates in digital communities, from casual users to creators, journalists, and policymakers. This article explains what lobasincensura means, how algorithmic moderation works, why platforms regulate speech, and what the future of online expression might look like.

Understanding Lobasincensura

Definition and Meaning

Lobasincensura refers to discussions about uncensored digital discourse and the perception that online speech may be shaped or restricted by algorithmic systems and platform policies.

In simple terms, it reflects concerns about:

- algorithmic visibility suppression

- information filtering algorithms

- content moderation systems

- narrative amplification or suppression online

These factors influence which posts appear in feeds, which topics trend, and which voices reach large audiences.

Difference Between Moderation and Censorship

Online platforms often distinguish between content moderation and censorship, although the line between them can sometimes feel unclear to users.

| Aspect | Content Moderation | Censorship |
|---|---|---|
| Purpose | Maintain platform safety and community standards | Restrict or suppress certain viewpoints |
| Control | Platforms enforce rules using moderation policies | Usually associated with government or authority control |
| Methods | AI moderation tools, reporting systems, policy enforcement | Removal, blocking, or suppression of content |
| Debate | Necessary for safe communities | Seen as limiting freedom of expression |

Most platforms claim they practice moderation, not censorship. However, debates arise when users believe algorithmic bias or platform governance decisions influence speech visibility.

Origins of the Concept in Digital Culture

The idea behind lobasincensura emerged as social media platforms grew more powerful. As companies developed sophisticated machine learning moderation systems and automated filtering pipelines, discussions around algorithm transparency and digital speech regulation intensified.

People began asking questions such as:

- Why do some posts suddenly disappear from feeds?

- What causes engagement drops without explanation?

- How do algorithms decide which voices gain visibility?

These questions form the foundation of modern debates about digital speech autonomy.

How Algorithms Shape Online Narratives

Modern social platforms rely heavily on algorithms to organize massive volumes of information. These systems determine what content users see based on engagement signals, relevance metrics, and safety filters.

Content Ranking Algorithms

Platforms use ranking algorithms to prioritize posts that are likely to generate engagement. These systems analyze factors such as:

- likes, comments, and shares

- user interests and interaction history

- topic relevance

- platform safety guidelines

While these systems can improve the user experience, they also act as narrative amplification systems: some topics gain massive visibility while others remain largely unseen.
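To make the idea concrete, here is a minimal sketch of how a ranking score might combine the factors listed above. The `Post` fields, the weights, and the `safety_penalty` value are all assumptions chosen for illustration; real platforms do not publish their formulas.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    likes: int
    comments: int
    shares: int
    topic_match: float     # 0.0-1.0, hypothetical relevance to the viewer
    safety_penalty: float  # 0.0-1.0, hypothetical; 1.0 means fully demoted


# Hypothetical weights; real platforms do not publish theirs.
WEIGHTS = {"likes": 1.0, "comments": 2.0, "shares": 3.0}


def rank_score(post: Post) -> float:
    """Combine engagement, relevance, and a safety penalty into one score."""
    engagement = (
        WEIGHTS["likes"] * post.likes
        + WEIGHTS["comments"] * post.comments
        + WEIGHTS["shares"] * post.shares
    )
    return engagement * post.topic_match * (1.0 - post.safety_penalty)


def rank_feed(posts: list[Post]) -> list[Post]:
    """Return posts ordered from highest to lowest score."""
    return sorted(posts, key=rank_score, reverse=True)


feed = rank_feed([
    Post("a", likes=100, comments=10, shares=5, topic_match=0.9, safety_penalty=0.0),
    Post("b", likes=400, comments=50, shares=30, topic_match=0.9, safety_penalty=0.8),
    Post("c", likes=20, comments=2, shares=1, topic_match=0.5, safety_penalty=0.0),
])
print([p.post_id for p in feed])
```

In this toy feed, post `b` has the most engagement but still ranks below post `a` because of its safety penalty. A multiplicative penalty is only one possible design; an additive penalty or a hard cutoff would behave differently.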

Algorithmic Visibility Signals

Algorithms rely on signals to determine whether content should be promoted or limited. These signals may include:

- engagement rates

- language patterns detected by natural language moderation models

- user reports and moderation flags

- policy violations

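When a post triggers negative signals, such as repeated user reports or a likely policy violation, its distribution may be reduced. This outcome is often described as algorithmic visibility suppression.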
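One way to picture this is a function that turns signals into a visibility multiplier. The sketch below is illustrative only: the `Signals` fields, the thresholds, and the reduction factors are assumptions, not documented platform behavior.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    engagement_rate: float  # interactions / impressions, 0.0-1.0
    toxicity_score: float   # 0.0-1.0 from a hypothetical language model
    report_count: int       # user reports and moderation flags
    policy_violation: bool  # confirmed violation


def visibility_multiplier(s: Signals) -> float:
    """Return a factor in [0, 1] applied to a post's normal distribution.

    All thresholds here are illustrative assumptions.
    """
    if s.policy_violation:
        return 0.0          # excluded from recommendations entirely
    multiplier = 1.0
    if s.toxicity_score >= 0.8:
        multiplier *= 0.25  # strong language-model signal
    if s.report_count >= 5:
        multiplier *= 0.5   # repeated user reports
    if s.engagement_rate < 0.01:
        multiplier *= 0.8   # weak engagement
    return multiplier


print(visibility_multiplier(Signals(0.05, 0.1, 0, False)))
print(visibility_multiplier(Signals(0.05, 0.9, 6, False)))
```

The second post stays online but would reach only a fraction of its usual audience, which is the pattern users tend to describe as suppression.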

Engagement-Based Amplification

Content that sparks strong reactions often spreads faster. However, this can lead to algorithmic narrative prioritization, where sensational or emotionally charged content dominates discussions.

This dynamic shapes which conversations gain momentum, and with them the direction of online discourse.

Algorithmic Censorship Mechanisms

The perception of lobasincensura often stems from how automated moderation works behind the scenes.

Shadow Banning and Visibility Suppression

One commonly discussed mechanism is shadow banning, where content remains online but becomes harder for others to find.

Possible indicators include:

- sudden drops in reach or engagement

- disappearance from hashtag searches

- reduced visibility in recommendation feeds

Although platforms rarely confirm shadow banning, many users associate these patterns with silent content suppression techniques.
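Creators who export their own analytics can check for the first indicator with a simple comparison. The sketch below assumes a list of daily reach numbers and uses a hypothetical threshold of 50 percent. A drop flagged this way does not prove suppression, since seasonality, topic changes, or ordinary ranking updates can produce the same pattern.

```python
from statistics import median


def reach_drop_ratio(daily_reach: list[int], recent_days: int = 3) -> float:
    """Compare recent median reach with the median of the earlier baseline.

    Returns recent / baseline; values well below 1.0 indicate a drop.
    """
    if len(daily_reach) <= recent_days:
        raise ValueError("need more history than the recent window")
    baseline = median(daily_reach[:-recent_days])
    recent = median(daily_reach[-recent_days:])
    if baseline == 0:
        raise ValueError("baseline reach is zero")
    return recent / baseline


def looks_like_sudden_drop(daily_reach: list[int], threshold: float = 0.5) -> bool:
    """Flag a drop when recent reach falls below `threshold` of the baseline."""
    return reach_drop_ratio(daily_reach) < threshold


# Hypothetical daily reach figures for eight days.
history = [1000, 1100, 950, 1050, 1020, 300, 280, 310]
print(round(reach_drop_ratio(history), 3))
print(looks_like_sudden_drop(history))
```

Medians are used instead of means so that a single viral day in the baseline does not make an ordinary week look like a collapse.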

Automated Moderation Pipelines

Modern moderation systems operate through complex pipelines that combine human oversight with artificial intelligence.

Typical moderation flow:

- Content is uploaded to the platform

- AI classification systems scan text, images, and video

- The system detects sensitive content or policy violations

- Content is flagged for review or automatically limited

These automated content filtering pipelines allow platforms to handle millions of posts daily.
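The flow above can be sketched in a few lines. This is a toy version under stated assumptions: the `SENSITIVE_TERMS` weights stand in for a trained classifier, and the `limit_at` and `review_at` thresholds are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    LIMIT = "limit"          # automatically reduce distribution
    HUMAN_REVIEW = "review"  # queue for a human moderator


@dataclass
class Upload:
    text: str


# Hypothetical term weights standing in for a trained classifier.
SENSITIVE_TERMS = {"scam": 0.6, "attack": 0.5}


def classify(upload: Upload) -> float:
    """Toy classifier: return a risk score in [0, 1]."""
    words = upload.text.lower().split()
    score = sum(SENSITIVE_TERMS.get(w, 0.0) for w in words)
    return min(score, 1.0)


def moderate(upload: Upload, limit_at: float = 0.9, review_at: float = 0.5) -> Decision:
    """Route an upload based on its risk score."""
    risk = classify(upload)
    if risk >= limit_at:
        return Decision.LIMIT
    if risk >= review_at:
        return Decision.HUMAN_REVIEW
    return Decision.ALLOW


for text in ["nice photo of my dog", "this looks like a scam", "scam attack"]:
    print(text, "->", moderate(Upload(text)).value)
```

Sending mid-range scores to human review while acting automatically only on high scores is a common way to combine automation with oversight, though where the thresholds sit is a policy choice as much as a technical one.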

Machine Learning Bias

Machine learning moderation bias can occur when training data reflects existing social biases. This may lead to:

- disproportionate moderation of certain topics

- inconsistent enforcement across communities

- errors that fall more heavily on some groups or dialects than on others

As a result, discussions around moderation transparency and accountability continue to grow.
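A basic audit for disproportionate moderation compares flag rates across groups. The sketch below assumes a hypothetical audit log of `(group, was_flagged)` pairs. A gap between groups is a prompt for closer review rather than proof of bias, because the underlying rate of violations may differ.

```python
from collections import defaultdict


def flag_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute the share of flagged posts per group.

    `decisions` holds (group, was_flagged) pairs from a hypothetical audit log.
    """
    totals: dict[str, int] = defaultdict(int)
    flagged: dict[str, int] = defaultdict(int)
    for group, was_flagged in decisions:
        totals[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {g: flagged[g] / totals[g] for g in totals}


def disparity_ratio(rates: dict[str, float]) -> float:
    """Ratio of the highest to the lowest flag rate across groups."""
    lowest, highest = min(rates.values()), max(rates.values())
    if highest == 0:
        return 1.0           # nothing was flagged in any group
    if lowest == 0:
        return float("inf")  # at least one group was never flagged
    return highest / lowest


# Hypothetical log: group A flagged 2 of 10 times, group B 6 of 10 times.
log = (
    [("A", True)] * 2 + [("A", False)] * 8
    + [("B", True)] * 6 + [("B", False)] * 4
)
rates = flag_rates(log)
print(rates)
print(round(disparity_ratio(rates), 2))
```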

The Psychology Behind Self-Censorship Online

Another aspect of lobasincensura involves user behavior rather than platform technology.

Self-Censorship in Social Media Communities

Many users adapt their communication to avoid potential penalties or backlash.

Examples include:

- avoiding controversial topics

- modifying language to bypass filtering algorithms

- limiting personal opinions in public spaces

This phenomenon is often called self-censorship behavior.

Creator Fear of Platform Penalties

Content creators frequently depend on platform visibility for income and audience growth. When algorithms control reach, creators may feel pressure to adjust their content to fit platform norms.

This dynamic contributes to what is sometimes described as creator adaptation to algorithmic moderation.

Impact on Digital Identity

When people adjust their speech to avoid algorithmic penalties, it may influence digital identity expression.

Over time, communities may adopt indirect communication styles or coded language to navigate moderation systems.

Platform Governance and Digital Speech Regulation

Large technology companies play a significant role in shaping digital speech ecosystems.

Platform Moderation Policies

Companies and platforms such as Meta, Google, TikTok, X, and YouTube establish rules that determine acceptable content.

These policies address issues such as:

- misinformation

- harassment

- harmful or illegal content

- political advertising

While these policies are intended to protect users, their enforcement sometimes sparks debates about digital rights and freedom of speech.

Global Speech Regulation

Governments also influence how platforms manage content through regulatory frameworks. These regulations may require companies to remove harmful content quickly or face legal consequences.

This raises important questions about platform liability and the role of private companies in regulating speech.

Ethical Challenges

Balancing open expression with community safety is complex. Key ethical questions include:

- Should algorithms decide which ideas are visible?

- How transparent should moderation systems be?

- Who defines harmful content?

These debates highlight the importance of platform governance transparency.

Technology Behind Content Moderation

Moderation systems rely on multiple technologies to detect problematic content.

AI Moderation Models

Artificial intelligence models scan text, audio, images, and videos for violations. These models rely on:

- natural language processing

- image recognition

- behavioral pattern detection

The goal is to identify harmful content before it spreads widely.

Natural Language Filtering Systems

Language models can detect certain phrases, sentiment patterns, or keywords that indicate policy violations.

However, automated systems may misinterpret context, sarcasm, or cultural language differences.
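A small keyword filter shows why context is hard. This is a deliberately naive sketch with a hypothetical `BLOCKED_TERMS` list; production systems rely on trained models rather than keywords alone, but they can fail in similar ways.

```python
import re

# Hypothetical blocklist; real systems use trained models, not only keywords.
BLOCKED_TERMS = {"kill", "bomb"}


def keyword_flags(text: str) -> set[str]:
    """Return the blocked terms that appear as whole words in `text`."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return words & BLOCKED_TERMS


print(keyword_flags("I will kill you"))                  # a threat
print(keyword_flags("That comedian will kill tonight"))  # harmless idiom, still flagged
print(keyword_flags("Great skills on display"))          # no whole-word match
```

The filter cannot tell the threat from the idiom because both contain the same word. Matching whole words avoids flagging "skills", but it does nothing for sarcasm, quotation, or dialect.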

Video and Image Analysis

Platforms increasingly use visual recognition tools to identify prohibited imagery or harmful content. These systems analyze frames, objects, and metadata.

While powerful, these technologies still require human oversight to ensure fairness.

Societal Impact of Algorithmic Speech Control

The effects of algorithmic discourse filtering extend beyond individual users.

Journalism and Media Narratives

Algorithms can influence which news stories gain attention. This has prompted discussion of digital narrative suppression patterns and of how information spreads online.

Political Discourse

Social platforms often serve as spaces for political debate. When algorithms influence visibility, they may indirectly affect public opinion and policy discussions.

Cultural Polarization

Algorithmic amplification systems sometimes promote content that triggers strong reactions, which may contribute to cultural polarization within digital communities.

Future of Uncensored Digital Communication

As awareness of lobasincensura grows, researchers and technologists are exploring alternative models for online communication.

Decentralized Social Platforms

Decentralized networks distribute control across multiple participants rather than a single company. This can reduce centralized moderation authority.

Blockchain-Based Content Governance

Some developers propose blockchain-based systems where moderation decisions are transparent and community-driven.

Transparency in Algorithmic Governance

Many experts argue that platforms should provide clearer explanations about how algorithms rank and moderate content.

Possible transparency measures include:

- publishing moderation statistics

- explaining algorithmic decision signals

- allowing independent audits of moderation systems

Such initiatives could help address the growing moderation trust deficit among users.
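As a rough illustration of the first measure, the sketch below aggregates a list of moderation actions into a publishable summary. The record fields (`action`, `appealed`, `overturned`) and the appeal overturn rate are assumptions made for this example, not a standard reporting format.

```python
from collections import Counter


def transparency_summary(actions: list[dict]) -> dict:
    """Aggregate moderation actions into counts and an appeal overturn rate."""
    by_action = Counter(a["action"] for a in actions)
    appealed = [a for a in actions if a["appealed"]]
    overturned = sum(1 for a in appealed if a["overturned"])
    return {
        "total_actions": len(actions),
        "by_action": dict(by_action),
        # None when nothing was appealed, to avoid dividing by zero.
        "appeal_overturn_rate": overturned / len(appealed) if appealed else None,
    }


# Hypothetical moderation records.
actions = [
    {"action": "removed", "appealed": True, "overturned": True},
    {"action": "removed", "appealed": False, "overturned": False},
    {"action": "limited", "appealed": True, "overturned": False},
    {"action": "limited", "appealed": False, "overturned": False},
]
summary = transparency_summary(actions)
print(summary)
```

An overturn rate is worth publishing alongside raw counts because it hints at how often the initial decision was wrong, which counts alone cannot show.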

Strategies for Navigating Algorithmic Moderation

Although platform algorithms remain complex, users and creators can adopt practical strategies to maintain visibility while following platform rules.

Optimize Content Visibility

Creators often improve visibility by focusing on:

- meaningful engagement rather than clickbait

- clear, context-rich communication

- compliance with platform guidelines

Adapt Communication Styles

Understanding how moderation systems operate can help users avoid unintended triggers.

Examples include:

- avoiding misleading headlines

- providing context for sensitive topics

- engaging respectfully in discussions

Support Transparency Efforts

Many digital rights organizations advocate for greater accountability in algorithmic governance. Supporting these efforts may help improve fairness across platforms.

Frequently Asked Questions About Lobasincensura

What does lobasincensura mean in digital culture?

Lobasincensura refers to discussions about uncensored online discourse and the belief that algorithms and moderation policies influence which content becomes visible or suppressed on digital platforms.

How do algorithms decide which content appears in feeds?

Algorithms analyze engagement signals, user interests, policy compliance, and relevance. Posts that perform well according to these signals are more likely to appear in feeds or recommendations.

Is algorithmic censorship real?

Most platforms describe their systems as moderation rather than censorship. However, users sometimes perceive invisible moderation signals or engagement throttling algorithms as forms of censorship.

Why do creators worry about shadow banning?

Creators rely on platform visibility to reach audiences. Sudden drops in reach or engagement can affect income, growth, and influence, leading to concerns about algorithmic suppression.

Can decentralized platforms prevent censorship?

Decentralized networks can reduce centralized control, but they still face challenges such as community moderation, misinformation control, and governance structure.

Conclusion

The concept of lobasincensura highlights a growing tension in the digital age: the balance between open expression and responsible moderation. As online platforms rely more heavily on algorithms and AI moderation tools, debates about narrative control, algorithmic bias, and transparency continue to evolve.

Understanding how content moderation systems operate can help users navigate digital environments more effectively. At the same time, ongoing discussions about algorithmic governance, decentralized communication models, and transparency initiatives will likely shape the future of online speech.

In the coming years, the challenge will not simply be removing harmful content but ensuring that digital platforms remain spaces where diverse voices can be heard without hidden barriers or unfair suppression.